Getting started with AWS: Guide for developers

Amazon Web Services is one of the largest cloud platform providers out there. If you’ve never used it, all the acronyms and service names can make your head spin.

The goal for this post is to help you understand basic concepts and services in Amazon Web Services, and how to use them to build and host your own application.

Amazon provides an informative website and a web control panel for managing AWS services: the AWS Management Console.

Basic Concepts

Amazon Web Services, being a virtualized cloud platform, abstracts the physical infrastructure management away from you. While this is a good thing (unless you happen to like cold halls with lots of flashing lights and a deafening whirr), you will need to know about two basic concepts:

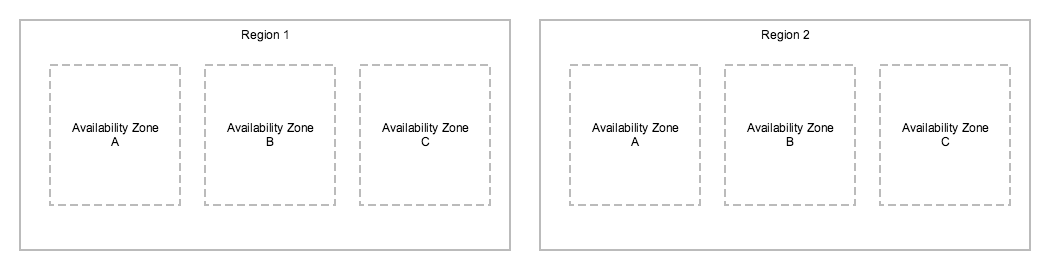

Regions

Amazon operates multiple regions worldwide, in separate geographical locations. At the time of writing, a total of 15 regions are available for public use. While not all AWS services are supported in every region, the most common ones are generally available everywhere. By running your services in a region close to your clients, you can provide fast connectivity to your services and control where in the world your data resides.

Availability Zones

Each region in AWS consists of multiple availability zones. These are isolated locations with their own essential network, power and datacenter infrastructure. By splitting your application deployment between availability zones, you greatly increase the chances of it staying up and running even in case of faults.
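To make the idea of splitting a deployment between availability zones concrete, here is a minimal sketch that assigns servers to zones in round-robin order. The zone names and server names are illustrative assumptions, and the function is a hypothetical helper, not part of any AWS library.

```python
from collections import Counter
from itertools import cycle


def spread_across_zones(instance_names, zones):
    """Assign each instance to a zone in round-robin order."""
    if not zones:
        raise ValueError("at least one availability zone is required")
    zone_cycle = cycle(zones)
    return {name: next(zone_cycle) for name in instance_names}


# Illustrative zone names; the zones you can use depend on your account and region.
zones = ["eu-west-1a", "eu-west-1b", "eu-west-1c"]
placement = spread_across_zones(["web-1", "web-2", "web-3", "web-4"], zones)

# Count how many instances ended up in each zone.
per_zone = Counter(placement.values())
print(placement)
print(per_zone)
```

With a placement like this, losing a single zone takes out only the servers assigned to it, and the rest keep serving traffic.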

AWS services

Amazon Web Services offers a plethora of services (a total of 94 separate services at the time of writing) to meet your needs.

Next we’ll go through some of the most common ones.

EC2: Elastic Compute Cloud

EC2 is the basic building block of services hosted in AWS. An EC2 instance is a virtualized server in the cloud. You can select between Linux and Windows instances, and a multitude of instance types ranging from low-performance (and inexpensive) to high-performance (and yes, expensive). The instance types are also optimised for different workloads - if the applications you run are particularly demanding on CPU or memory, you will be able to choose a suitable instance type to support them.

In their simplest form, every EC2 instance gets a public IP address. AWS provides you with credentials to log in, and after you’ve configured the Security Group (a firewall defining what traffic can reach your instance), you’re all set to log in and start configuring your server.
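A Security Group works as an allow-list: inbound traffic is dropped unless some rule permits it. The sketch below models that behaviour in plain Python so you can reason about rules before creating them. The `IngressRule` type, the `is_allowed` function and the address ranges are all assumptions made for illustration; this is not the AWS API.

```python
from ipaddress import ip_address, ip_network
from typing import NamedTuple


class IngressRule(NamedTuple):
    protocol: str   # e.g. "tcp"
    from_port: int  # first port in the allowed range
    to_port: int    # last port in the allowed range
    cidr: str       # source address range allowed to connect


def is_allowed(rules, protocol, port, source_ip):
    """Return True if at least one rule permits the inbound connection."""
    source = ip_address(source_ip)
    return any(
        rule.protocol == protocol
        and rule.from_port <= port <= rule.to_port
        and source in ip_network(rule.cidr)
        for rule in rules
    )


rules = [
    IngressRule("tcp", 22, 22, "203.0.113.0/24"),  # SSH only from an example office range
    IngressRule("tcp", 443, 443, "0.0.0.0/0"),     # HTTPS from anywhere
]

print(is_allowed(rules, "tcp", 22, "203.0.113.10"))  # SSH from the office range
print(is_allowed(rules, "tcp", 22, "198.51.100.7"))  # SSH from elsewhere
```

Restricting SSH to a known address range while leaving HTTPS open is a common starting point, but the right rules depend on your application.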

Every EC2 instance is started from an AMI (Amazon Machine Image), which is an image of a preconfigured operating system. There are official AMIs for most common Linux distributions (Ubuntu, Debian, RHEL, CentOS) and Windows versions. There are also lots of community-provided AMIs with preconfigured software built on top of the operating system images. You’ll be able to find an AMI with a preconfigured LAMP environment, for example.

EC2 instances store data either on instance storage or EBS volumes. Instance storage is temporary - it gets emptied when your server is stopped. EBS (or Elastic Block Store) is a dedicated volume that is persistent. You can attach multiple EBS volumes to an instance, and they can be modified on the fly.

S3: Simple Storage Service

Amazon S3 is another commonly used storage service in AWS. It is fundamentally an object storage service that’s often used to store files in a flat structure inside buckets.

The flat structure means there is no notion of directories. Instead, every object has a key, and the leading part of that key acts as a prefix: an example path to an object on S3 could be s3://my-s3-bucket/backup/2017/02/18/mongo.tgz. Many of the operations you can do on S3 can be done per prefix, essentially simulating a directory-like filesystem.
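Here is a small sketch of how a flat key space can be made to look like directories. It runs on a plain Python list of keys rather than on S3 itself; the helper functions and every key other than the mongo.tgz example above are made up for illustration. The real S3 listing API offers prefix and delimiter parameters that behave in a similar spirit.

```python
def list_keys(keys, prefix=""):
    """Return all keys that start with the given prefix."""
    return sorted(key for key in keys if key.startswith(prefix))


def list_children(keys, prefix="", delimiter="/"):
    """Return the immediate 'entries' under a prefix, like a directory listing."""
    children = set()
    for key in keys:
        if not key.startswith(prefix):
            continue
        rest = key[len(prefix):]
        # Keep everything up to and including the first delimiter, if any.
        head, sep, _ = rest.partition(delimiter)
        children.add(prefix + head + sep)
    return sorted(children)


keys = [
    "backup/2017/02/18/mongo.tgz",
    "backup/2017/02/19/mongo.tgz",
    "backup/2017/03/01/mongo.tgz",
    "logs/app.log",
]

print(list_keys(keys, "backup/2017/02/"))
print(list_children(keys, "backup/2017/"))
```

The first call returns every backup from February 2017; the second returns the "subdirectories" under backup/2017/, even though no directories exist anywhere.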

S3 can be accessed via an API programmatically, or using an S3 client. AWS also provides a graphical S3 management panel inside the AWS Management Console.

Amazon S3 is much cheaper than EBS, so it is more suitable for long-term storage of data. It also provides multiple levels of storage reliability and access frequency, so you can save even more money storing objects you need infrequently (backups) or if you can live with the risk of losing one in ten thousand objects (backups).

RDS: Relational Database Service

RDS is the Amazon-hosted relational database service. It provides you with a choice of six database engines (MySQL, PostgreSQL, MariaDB, Microsoft SQL Server, Oracle and Amazon Aurora).

RDS enables easy provisioning and maintenance of database servers, including scaling and backups. This takes many of the tedious tasks of managing databases off your hands.

When provisioning RDS servers, Amazon provides you with an endpoint your applications will use - just like using a self-managed database server. Access to RDS servers is also configured via Security Groups, just like EC2 instances.

To develop with RDS in mind, run the same database engine for development purposes locally. Make sure you can configure your application to use a different database address (and access credentials) when you run it in AWS, and deployment should be a breeze.
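One common way to keep the database address configurable is to read it from environment variables, with defaults that suit local development. The sketch below assumes PostgreSQL (hence the default port 5432); the variable names, the defaults and the endpoint hostname are placeholders chosen for illustration, not values AWS gives you.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class DatabaseConfig:
    host: str
    port: int
    name: str
    user: str
    password: str


def load_database_config(env=os.environ):
    """Build the database settings from environment variables.

    Falls back to local development defaults when a variable is not set.
    """
    return DatabaseConfig(
        host=env.get("DB_HOST", "localhost"),
        port=int(env.get("DB_PORT", "5432")),
        name=env.get("DB_NAME", "app_dev"),
        user=env.get("DB_USER", "app"),
        password=env.get("DB_PASSWORD", ""),
    )


# Locally, nothing is set, so the defaults apply.
local_config = load_database_config({})

# In AWS, the deployment sets the variables to point at the RDS endpoint.
aws_config = load_database_config({
    "DB_HOST": "mydb.example.com",  # placeholder for the endpoint RDS gives you
    "DB_NAME": "app",
    "DB_USER": "app",
    "DB_PASSWORD": "change-me",
})

print(local_config.host, aws_config.host)
```

The application code stays identical in both environments; only the environment it runs in changes.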

VPC: Virtual Private Cloud

Virtual Private Cloud is a way to create networks inside Amazon Web Services. As all modern EC2 instances run inside a VPC (historically this was not the case), you can configure them to reach each other using internal IP addresses. This gets around AWS data transfer fees, makes connections faster and adds security (it usually doesn’t make sense to expose, for example, a database server publicly).
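As a rough illustration of preferring internal addresses, the sketch below picks which address a caller should use to reach a server, based on whether the caller sits inside the same VPC address range. The 10.0.0.0/16 range, the addresses and both helper functions are assumptions for this example only.

```python
from ipaddress import ip_address, ip_network

# Hypothetical address range for the VPC; yours is chosen when you create it.
VPC_CIDR = ip_network("10.0.0.0/16")


def in_vpc(address, vpc=VPC_CIDR):
    """Return True if the address belongs to the VPC's address range."""
    return ip_address(address) in vpc


def pick_address(private_ip, public_ip, caller_ip):
    """Prefer the internal address when the caller is inside the same VPC."""
    return private_ip if in_vpc(caller_ip) else public_ip


# An application server inside the VPC talks to the database over its internal IP.
print(pick_address("10.0.2.20", "203.0.113.50", caller_ip="10.0.1.15"))

# A machine outside the VPC would have to use the public address instead.
print(pick_address("10.0.2.20", "203.0.113.50", caller_ip="198.51.100.7"))
```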

You can use a VPN tunnel to connect to a VPC from your own computer.

Try it out: The Free Tier

Amazon offers a Free Tier for new AWS accounts. For 12 months, you’ll be able to use most of the services free of charge. You will still have to provide a valid credit card for signing up, but if you stay within the free tier limits, you won’t get charged. A word of warning, though: there are no flashing lights or dinging bells when you exceed the free tier limits, so I suggest you keep an eye on the billing page to avoid unexpected charges. You can also set up a billing alert.

Costs

As AWS is built on the idea of Pay What You Use, you are able to host small services for very little money, or even for free with the free tier.

It’s good, however, to have an idea of what generally ends up being the largest costs for stacks running on AWS.

With the clients I’ve worked with, the AWS bill generally consists of the following, in more or less this order:

- EC2 instance fees, including EBS volumes

- RDS database fees

- Data transfer (out of AWS)

- S3 fees

But, there’s a catch

Most of the services AWS provides are meant to make your life easier by providing functionality that you otherwise would have to build and maintain yourself (for example, queueing with SQS). This is generally a good thing: it’s very quick to get started and build beautiful things.

But by using AWS-provided services extensively, you are essentially locking yourself in with a single vendor. It’s up to you to decide if that’s a problem or not.

Personally, I think that’s a Happy Problem. While AWS is not the cheapest cloud provider around, if I’m ever in a situation where one of my services hits millions of users and becomes costly, I’ll gladly think of ways around it.
